TITAN V and R performance

February 20, 2018

I have been using an AMD/ATI Radeon 7970 3GB GPU since it first came out in 2012, and for a number of reasons I have finally decided it was time for an upgrade! (One of the reasons is that TensorFlow's GPU support only works with CUDA.)

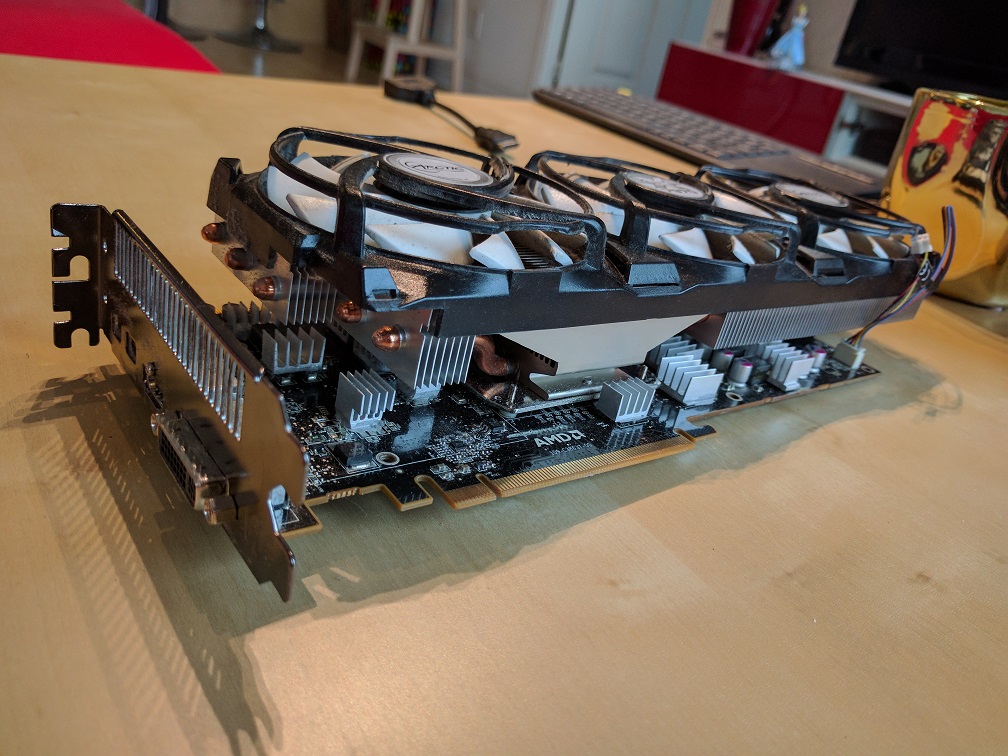

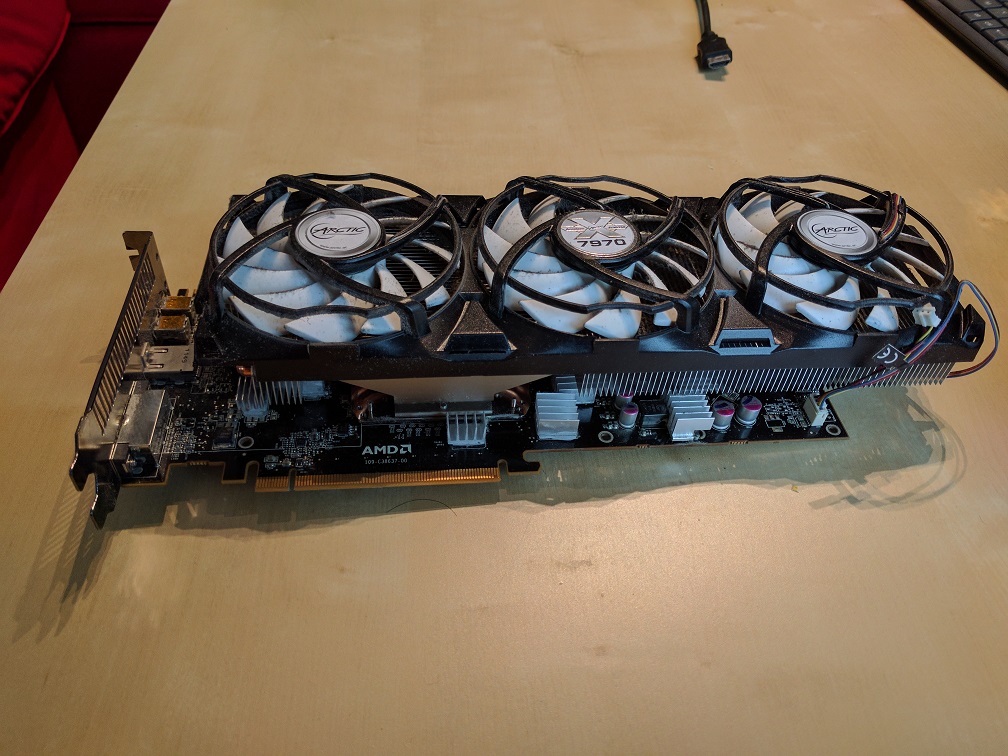

Goodbye ol’ faithful 7970

I’m going to miss this card. It’s held up very well over the years, and the Arctic Accelero cooler I put on it made the card whisper quiet while also running about 20°C cooler!

7970 w/ Accelero cooler 1

7970 w/ Accelero cooler 2

Hello TITAN V

I got the TITAN V as the replacement for the 7970. Here are some unboxing pics I took:

Pretty white box

Card in box

TITAN V next to 7970

The TITAN V was released a few months ago and there are already several excellent reviews of the card. I highly recommend AnandTech's.

| | Titan V | Titan Xp | GTX Titan X (Maxwell) | GTX Titan |
|---|---|---|---|---|
| CUDA Cores | 5120 | 3840 | 3072 | 2688 |
| Tensor Cores | 640 | N/A | N/A | N/A |
| ROPs | 96 | 96 | 96 | 48 |
| Core Clock | 1200MHz | 1485MHz | 1000MHz | 837MHz |
| Boost Clock | 1455MHz | 1582MHz | 1075MHz | 876MHz |
| Memory Clock | 1.7Gbps HBM2 | 11.4Gbps GDDR5X | 7Gbps GDDR5 | 6Gbps GDDR5 |
| Memory Bus Width | 3072-bit | 384-bit | 384-bit | 384-bit |
| Memory Bandwidth | 653GB/sec | 547GB/sec | 336GB/sec | 288GB/sec |
| VRAM | 12GB | 12GB | 12GB | 6GB |
| L2 Cache | 4.5MB | 3MB | 3MB | 1.5MB |
| Single Precision | 13.8 TFLOPS | 12.1 TFLOPS | 6.6 TFLOPS | 4.7 TFLOPS |
| Double Precision | 6.9 TFLOPS (1/2 rate) | 0.38 TFLOPS (1/32 rate) | 0.2 TFLOPS (1/32 rate) | 1.5 TFLOPS (1/3 rate) |
| Half Precision | 27.6 TFLOPS (2x rate) | 0.19 TFLOPS (1/64 rate) | N/A | N/A |
| Tensor Performance (Deep Learning) | 110 TFLOPS | N/A | N/A | N/A |
| GPU | GV100 (815mm²) | GP102 (471mm²) | GM200 (601mm²) | GK110 (561mm²) |
| Transistor Count | 21.1B | 12B | 8B | 7.1B |
| TDP | 250W | 250W | 250W | 250W |
| Manufacturing Process | TSMC 12nm FFN | TSMC 16nm FinFET | TSMC 28nm | TSMC 28nm |
| Architecture | Volta | Pascal | Maxwell 2 | Kepler |
| Launch Date | 12/07/2017 | 04/07/2017 | 03/17/2015 | 02/21/2013 |
| Price | $2999 | $1299 | $999 | $999 |
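The Memory Bandwidth row follows from the two rows above it: peak bandwidth is the bus width multiplied by the per-pin data rate, divided by 8 to convert bits to bytes. Here is a minimal Python sketch of that arithmetic for the two newest cards. The function name `memory_bandwidth_gb_s` is just an illustrative helper, not something from a vendor tool.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s.

    bus_width_bits: width of the memory bus in bits (pins).
    data_rate_gbps: per-pin data rate in gigabits per second.
    """
    return bus_width_bits * data_rate_gbps / 8  # 8 bits per byte


# (bus width in bits, data rate in Gbps), taken from the spec table
cards = {
    "Titan V": (3072, 1.7),
    "Titan Xp": (384, 11.4),
}

bandwidths = {name: memory_bandwidth_gb_s(*spec) for name, spec in cards.items()}

for name, bw in bandwidths.items():
    print(f"{name}: {bw:.1f} GB/s")
```

This is how the TITAN V ends up with more bandwidth than the Titan Xp despite a much lower memory clock: the HBM2 bus is eight times wider.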

Benchmarks

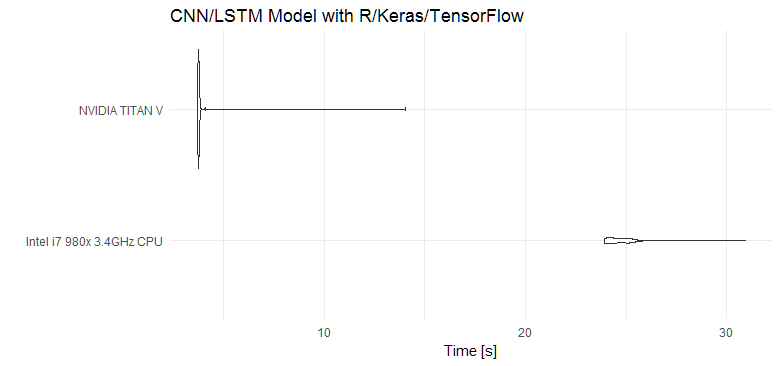

R/Keras - CNN/LSTM Model

This is a run on the IMDb sentiment classification dataset, taken from an example on the Keras RStudio website.

The model itself consists of a convolutional layer followed by an LSTM layer. One epoch covers 25,000 training samples.

Training time with an Intel i7 980x CPU at 3.4GHz:

It’s taking about 25s to complete 1 epoch on the Intel CPU.

Training time with TITAN V:

It’s taking about 4s to complete 1 epoch on the TITAN V.

The first run on the TITAN V was noticeably longer, at 14s, but each successive run after that completed in 4s. As seen in the graph above, the TITAN V absolutely crushes the Intel CPU at the same batch size, even though it is only using about 30% of its capacity. Comparing steady-state epochs (25s vs. 4s), the Intel CPU was 6.25x slower than the TITAN V.
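To see how much the slow first run matters, here is a rough Python sketch of the effective speedup over a whole training job. It assumes the 14s applies only to the first epoch, that every later GPU epoch takes 4s, and that every CPU epoch takes 25s. The epoch counts are hypothetical, not something I measured.

```python
CPU_EPOCH_S = 25.0        # measured: seconds per epoch on the i7 980x
GPU_FIRST_EPOCH_S = 14.0  # measured: first (warm-up) run on the TITAN V
GPU_STEADY_EPOCH_S = 4.0  # measured: each successive run on the TITAN V


def effective_speedup(n_epochs: int) -> float:
    """CPU total time divided by GPU total time over n_epochs (assumed model)."""
    if n_epochs < 1:
        raise ValueError("n_epochs must be at least 1")
    cpu_total = CPU_EPOCH_S * n_epochs
    gpu_total = GPU_FIRST_EPOCH_S + GPU_STEADY_EPOCH_S * (n_epochs - 1)
    return cpu_total / gpu_total


# Hypothetical job lengths, chosen only for illustration
for n in (1, 10, 100):
    print(f"{n:>3} epochs: {effective_speedup(n):.2f}x")
```

Under these assumptions the warm-up cost fades quickly: a single epoch is only about 1.8x faster, ten epochs come out at 5x, and longer jobs approach the steady-state 6.25x.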

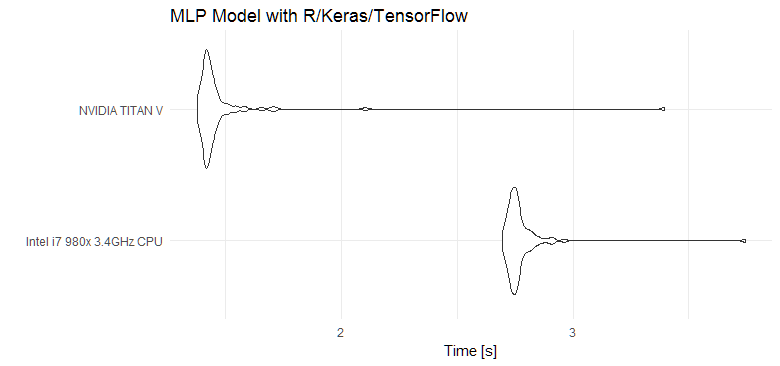

R/Keras - MLP Model

A multilayer perceptron model trained on Reuters newswire data for a topic classification task, found here.

Here, the TITAN V is again faster, but not by as much as with the CNN/LSTM model. The Intel CPU is 1.9x slower.

Other - Mining Garlicoins (cryptocurrency)

Garlicoin is a new cryptocurrency and I’ve been mining it for fun on my 7970 GPU. I will also do some mining (or baking rather) on the TITAN V.

Speed comparison on 7970:

7970 hashrate

Speed comparison on TITAN V:

TITAN V hashrate

The 7970 was able to mine with a hashrate of approx. 310Kh/s, while the TITAN V was able to mine with a hashrate of approx. 12,500Kh/s. That’s a 40.32x improvement!

Other - Unigine Superposition

Superposition is a new-generation benchmark tailored for testing reliability and performance of the latest GPUs.

Running at 1080p Extreme - scores:

NVIDIA TITAN V: 8742

AMD 7970: 1887

The TITAN V is 4.63x faster here.
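For the two "Other" benchmarks, higher numbers are better, so the speedup is simply the new result divided by the old one. A small Python sketch that reproduces both ratios from the figures above (the helper name `throughput_speedup` is made up for this example):

```python
def throughput_speedup(new: float, old: float) -> float:
    """Speedup for higher-is-better metrics such as hashrate or benchmark score."""
    if old <= 0:
        raise ValueError("baseline must be positive")
    return new / old


# (TITAN V result, 7970 result), as reported in this post
results = {
    "Garlicoin hashrate (Kh/s)": (12_500, 310),
    "Superposition 1080p Extreme score": (8742, 1887),
}

speedups = {
    name: throughput_speedup(new, old) for name, (new, old) in results.items()
}

for name, ratio in speedups.items():
    print(f"{name}: {ratio:.2f}x")
```

Note that this is the inverse of the Keras comparisons, where the metric is time per epoch and lower is better, so there the ratio is old time over new time.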

Conclusion

Really expensive… but really fast!